63 earnings calls. 0 engineering outcomes tied to AI.

We analyzed 63 recent earnings calls and found that 21 companies reported how much of their code is AI-generated, but none connected that number to any engineering outcome. Here's what they said, why the gap exists, and what your team can check this week.


HubSpot's CEO told analysts that 97% of their committed code last year was written with AI assistance.

Google's CFO said about 50% of their code is written by AI agents.

Spotify's co-CEO said their best developers haven't written a single line of code since December.

These are all from earnings calls in the last four months.

I got curious about this, so I went through 63 companies' recent earnings transcripts.

21 mentioned AI for internal engineering. And the pattern was the same every time:

These companies all ship software. They all track how often they deploy, how fast they resolve incidents, and how their engineering org performs. And they can all tell you what percentage of their code is AI-generated. What they can't tell you is whether the AI number changed any of the engineering ones.

Across all 63 companies, I found zero mentions of deployment frequency tied to AI.

Zero on MTTR.

Zero on lead time, change failure rate, or incident reduction connected to AI tooling.

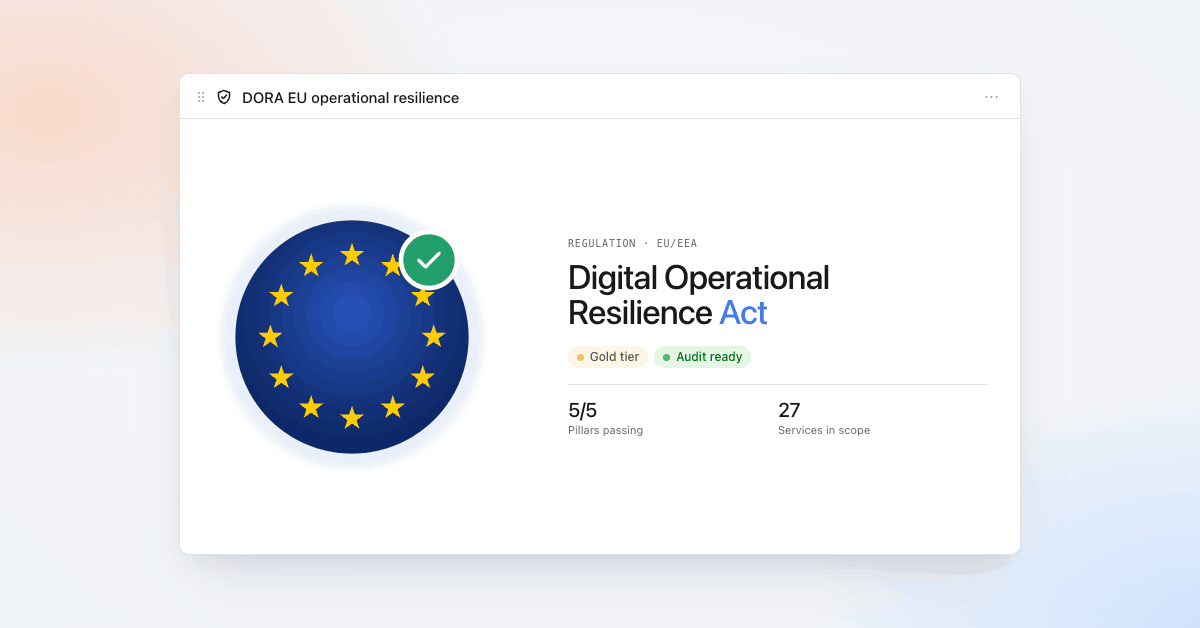

These are the metrics DORA has tracked for a decade as the standard for engineering performance. They exist. The AI number exists. On earnings calls, the two just never appear in the same sentence.

Airbnb's Brian Chesky made a similar point on his own call. He noted that getting to 100% AI tool adoption would be a "vanity metric." The real question, he said, is whether the organization adapts fast enough to use those tools well.

I think he's right. But even Airbnb reported the adoption number without connecting it to any engineering outcome.

Individual output is up. Organizational output isn't.

Faros AI looked at telemetry from 10,000 developers across 1,255 engineering teams: not surveys, but actual data from source control, CI/CD, and task trackers. Individual output was up 21%. PRs merged were up 98%. But PR review times grew 91%, and PR sizes grew 154%.

Net organizational delivery velocity: flat.

Engineers are producing more code. Organizations aren't shipping faster. The bottleneck moved from writing code to reviewing, testing, and integrating it.
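To see why more code per engineer can leave delivery flat, it helps to treat delivery as a serial pipeline whose throughput is capped by its slowest stage. The Python sketch below is a toy model, not Faros AI's methodology: the stage names and weekly capacities are hypothetical numbers chosen only to show the shape of the effect. Doubling authoring capacity moves the bottleneck to review, and if bigger PRs also make each review a bit slower, end-to-end throughput doesn't move.

```python
# Toy model with hypothetical numbers: in a serial pipeline, delivered
# changes per week are capped by the slowest stage (the bottleneck).


def bottleneck(stage_capacity: dict[str, float]) -> tuple[str, float]:
    """Return the slowest stage and its capacity, which caps throughput."""
    stage = min(stage_capacity, key=stage_capacity.get)
    return stage, stage_capacity[stage]


# Hypothetical capacities, in changes per week, before AI coding tools.
before = {"write": 8.0, "review": 9.0, "test_and_integrate": 10.0}

# Hypothetical "after": authoring capacity doubles, but larger PRs make
# each review take longer, so review capacity drops slightly.
after = {"write": 16.0, "review": 8.0, "test_and_integrate": 10.0}

print(bottleneck(before))  # ('write', 8.0)
print(bottleneck(after))   # ('review', 8.0)
```

In this model, the only change that raises throughput is more capacity at the bottleneck stage itself, which is why review, testing, and integration start to matter once authoring speeds up.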

The companies that could connect AI to outcomes had one thing in common

Some companies did give outcome numbers on earnings calls. Pfizer: $600 million saved through Golden Batch AI in manufacturing. Amazon: Q Developer migrated 30,000 Java applications, saving $260 million a year, with 79% of auto-generated code shipped without human changes.

Those companies could draw the line between AI and outcomes because they were already tracking the specific metric AI was going to change. Pfizer had years of batch data (yield, cycle time, cost-per-unit) for the exact process Golden Batch would optimize. Amazon had a clear before (50 developer-days per Java migration) and a clear after (a few hours).

For engineering, this is genuinely harder. Manufacturing batches are comparable by design: same inputs, same process, same expected output. Engineering work isn't. Every feature has a different scope. Every sprint has different complexity. If your deployment frequency went up 30% after rolling out AI tools, it could be the AI. It could also be that you shipped a simpler roadmap that quarter, or hired three seniors, or finally fixed the CI pipeline that was slowing everyone down. Isolating what AI actually changed from everything else that changed at the same time is a real problem, and most engineering orgs haven't solved it.

That's why the claims that do exist tend to fall apart. IBM claimed 45% productivity gains from Project Bob across 20,000 developers. But in IBM's own published research, the data was self-reported. Not what people shipped. What they said it felt like.

Spotify said their best engineers stopped writing code. That tells you how the workflow changed. It doesn't tell you whether Spotify ships more reliably or resolves incidents faster.

Don't just report AI adoption. Connect it to something.

The code percentages are going to keep climbing. They'll reach 80 or 90% at most companies within a year or two. At that point the number alone stops being interesting, the same way "we use cloud" did a decade ago.

The company that connects the AI number to an engineering outcome — "50% of our code is AI-generated, and our deployment frequency increased 30% over the same period" — will stand out from the nine companies that can only report the first half.

For engineering broadly, that connection still hasn't been made on any earnings call we looked at. The engineering org that makes it first will be the one everyone else gets compared to.

What you can check this week

If your CEO gets asked about engineering AI on the next earnings call, could your team connect the AI adoption number to an engineering outcome?

Three things worth looking at:

Do you have a before number for the workflows AI is supposed to improve? If you deployed AI coding tools six months ago and didn't snapshot your deployment frequency, lead time, or change failure rate at that point, it's going to be hard to show what changed. If you haven't captured that baseline yet, do it now; if your deploy and incident history still reaches back before the rollout, you may be able to reconstruct it.
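One way to capture that baseline is to compute it directly from deployment history. The Python sketch below assumes a hypothetical `Deployment` record that you would populate from your own CI/CD and incident data; the field names, and the choice to measure lead time from the earliest commit in each deploy, are assumptions for illustration rather than a standard schema. It returns deployment frequency, median lead time, and change failure rate for any date window, so you could run it once for the period before rollout and again for a period after.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deployment:
    # Hypothetical record shape: map these fields from your own tooling.
    deployed_at: datetime      # when the change reached production
    first_commit_at: datetime  # earliest commit included in this deploy
    caused_failure: bool       # led to an incident, rollback, or hotfix


def baseline(deploys: list[Deployment], start: datetime, end: datetime) -> dict[str, float]:
    """DORA-style snapshot for deploys in the half-open window [start, end)."""
    window = [d for d in deploys if start <= d.deployed_at < end]
    if not window:
        raise ValueError("no deployments in this window")
    weeks = (end - start) / timedelta(weeks=1)
    lead_hours = [(d.deployed_at - d.first_commit_at) / timedelta(hours=1) for d in window]
    return {
        "deploys_per_week": len(window) / weeks,
        "median_lead_time_hours": median(lead_hours),
        "change_failure_rate": sum(d.caused_failure for d in window) / len(window),
    }


# Hypothetical data: four weekly deploys in the four weeks before an October rollout.
deploys = [
    Deployment(datetime(2025, 9, 2, 12), datetime(2025, 9, 1, 12), False),
    Deployment(datetime(2025, 9, 9, 12), datetime(2025, 9, 8, 0), True),
    Deployment(datetime(2025, 9, 16, 12), datetime(2025, 9, 15, 12), False),
    Deployment(datetime(2025, 9, 23, 12), datetime(2025, 9, 21, 12), False),
]
print(baseline(deploys, datetime(2025, 9, 1), datetime(2025, 9, 29)))
# {'deploys_per_week': 1.0, 'median_lead_time_hours': 30.0, 'change_failure_rate': 0.25}
```

The median is used for lead time because a single stalled change can skew a mean; whichever you pick, use the same definition for the before and after windows.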

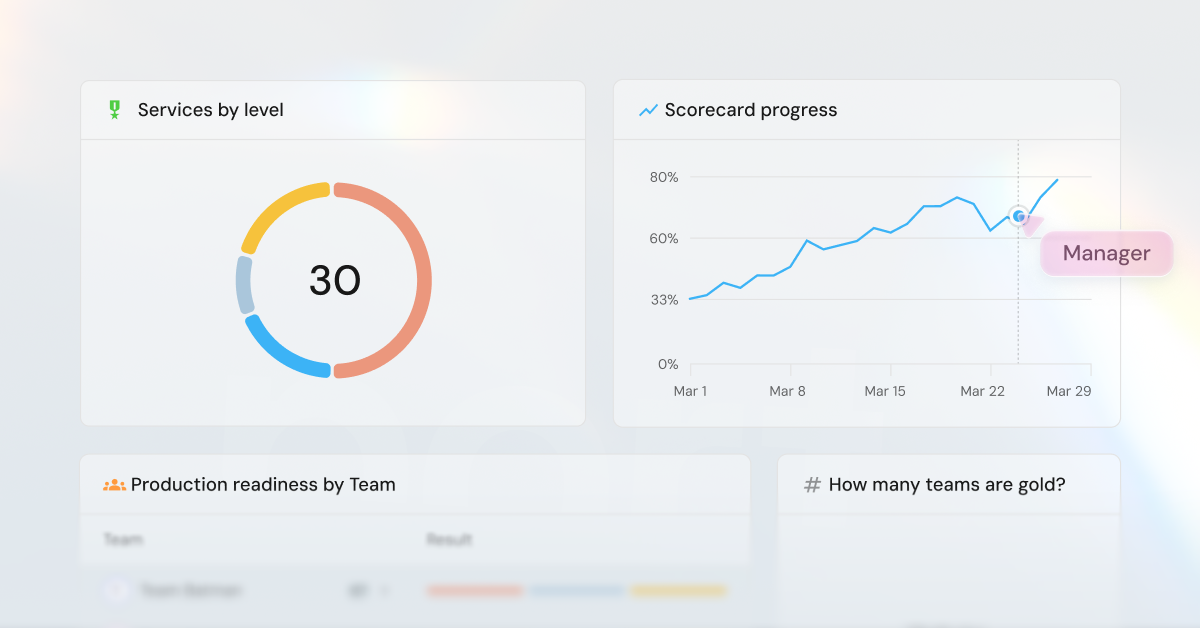

Is your dashboard connecting AI to engineering outcomes, or just tracking AI usage? If the headline metric is Copilot adoption rate or tokens generated, that's the same kind of number nine other companies already reported this quarter. The numbers that held up on earnings calls were resolution time, cost per unit, and hours saved on a named workflow. Worth checking whether yours ties any of those to AI.

Can you name one workflow with a before and after? Say you rolled out AI coding tools in October. Your average PR cycle time before was 4 days. Now it's 2.5 days, a 37.5% drop. That's one workflow, one before, one after. If you can draw that line for even one engineering process, you've made a connection most companies in this dataset haven't.
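The PR cycle time comparison above can be computed from pull request timestamps. This Python sketch assumes you have exported (opened_at, merged_at) pairs for merged PRs from your Git host; the data below is hypothetical, chosen to reproduce the 4-day and 2.5-day averages. It splits PRs by merge date relative to the rollout, so a PR opened before the rollout but merged after it counts toward the "after" group. Splitting by open date is an equally defensible choice, as long as you apply the same rule to both periods.

```python
from datetime import datetime, timedelta
from statistics import mean

# Each PR is a hypothetical (opened_at, merged_at) pair exported from your Git host.
PR = tuple[datetime, datetime]


def avg_cycle_days(prs: list[PR]) -> float:
    """Mean time from PR opened to PR merged, in days."""
    return mean((merged - opened) / timedelta(days=1) for opened, merged in prs)


def before_after(prs: list[PR], rollout: datetime) -> tuple[float, float, float]:
    """Average cycle time before and after rollout (split by merge date), plus % change."""
    before = [pr for pr in prs if pr[1] < rollout]
    after = [pr for pr in prs if pr[1] >= rollout]
    b, a = avg_cycle_days(before), avg_cycle_days(after)
    return b, a, (a - b) / b * 100


# Hypothetical PRs around an October rollout.
prs = [
    (datetime(2025, 9, 1), datetime(2025, 9, 5)),      # 4 days
    (datetime(2025, 9, 10), datetime(2025, 9, 13)),    # 3 days
    (datetime(2025, 9, 15), datetime(2025, 9, 20)),    # 5 days
    (datetime(2025, 10, 6), datetime(2025, 10, 8)),    # 2 days
    (datetime(2025, 10, 13), datetime(2025, 10, 16)),  # 3 days
]
print(before_after(prs, rollout=datetime(2025, 10, 1)))
# (4.0, 2.5, -37.5)
```

A negative percentage is a reduction: here, a 37.5% drop in average cycle time. Averages are sensitive to a few long-running PRs, so it may be worth checking the median as well.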

The 2025 DORA report found that AI amplifies whatever is already there. Teams with strong engineering platforms and observability get real gains. Teams without them get more instability. The foundation matters more than the tooling.

That's what we're building at Port. A platform that gives engineering teams the catalog, the baselines, and the visibility to connect AI adoption to what actually changed.
