Platform engineering in the age of AI

AI platform engineering can expand the capabilities of your AI agents the same way it does for your human devs. Learn how to go from tickets to prompts with AI.

If you’ve ever waited on approval for a new testing environment, you know what it means to be stuck: the thrill of working on a new feature quickly fades as you realize how many tickets you need to open. Maybe if the stars align you’ll get your testing environment in a week.

Platform engineering changes this, and AI kicks that change up a notch. Developers can now interact with platforms in natural language and hand much of the heavy lifting of software development to AI agents, which can write code, unit tests, and root cause analyses.

What used to be a frustrating, manual process has the potential to become a smooth, almost frictionless experience with AI. But what really makes this possible is the underlying platform the AI uses to complete tasks: a clear, concise platform for orchestration that treats AI agents and human developers as equal partners in the process of building software.

Port delivers on this orchestration model at scale, providing for AI the same way we have for human devs. In this post, we’ll talk about how to enable your AI agents smoothly and securely by applying platform engineering best practices.

What platform engineering can do for AI agents

Platform engineering is an evolution of DevOps practices. At Port, we believe that if humans can talk to AI the way they talk to each other, platform engineers will need to manage AI agents the same way they support their human devs. This is where internal developer portals (IDPs) come in.

DevOps practices improved response time, sure, but developers didn’t feel much improvement or time savings at all because they still had to open a ticket or ask for help. The cognitive load of resource provisioning shifted to devs, who needed to explain what they wanted without really knowing what was possible.

Platform engineering emerged to bridge the gap between developers and infrastructure teams, using internal developer platforms and self-service portals. These orchestration layers gave platform engineers a concrete place and structure to build golden paths through their software development lifecycles, allowing devs to click buttons, fill out forms, and provision resources without opening tickets or waiting on DevOps for approval.

IDPs gave developers autonomy and a unified view of their SDLC.

We’ve come to learn a few things at Port about this model:

- IDPs are only as useful as they are fast: Your platform builders have to translate the efficiencies of DevOps transformations into meaningful time and complexity savings for developers.

- The platform-as-a-product approach works: When you regularly add new features to your portal, support edge cases, and keep up with your devs’ demand for more complex self-service actions, you get a happier dev team and faster software development.

- Humans and AI agents need to work from the same context: If you’ve built a platform or portal already, this could mean exposing your existing actions and controls to AI; if not, you may want to consider AI use cases and build toward a human-in-the-loop approach when building out your new platform.

The only thing that has changed now is that your portals must include AI. The more developers rely on AI tools (and they’re using them, whether your organization has officially rolled any out or not), the more important it becomes that your platform team expands the portal’s capabilities to treat AI agents as first-class users.

Microsoft recently discussed how AI agents have the potential to transform platform engineering, from dependency management to code quality standards. Platform teams are uniquely positioned to build trust and transparency into AI agents, training them on your unique domain context and gradually rolling them out into production. This is the future, not just for Microsoft, but for every company that successfully uses AI to automate repetitive work and improve the overall development experience.

MCP x IDP: Adapting your portal for AI

Adding AI to your IDP isn’t just a cool UX upgrade. It’s a fundamental shift in how we build and ship software:

- AI helps developers stay in flow, reduce friction, and focus on what matters: solving problems and building products.

- AI helps platform teams scale operations without scaling headcount by letting machines handle the repetitive, reactive work in controlled environments.

- Organizations benefit from the speed and safety of a better developer experience, without compromising on control.

- Security teams get relief from agentic chaos, which occurs when multiple AI agents (coding, testing, security, etc.) act independently, without central coordination, shared rules, or context.

Internal developer portals do shift infrastructure ownership left, but they don’t eliminate all friction. When a portal can’t handle a new use case, developers are back in Slack, asking for help, waiting for manual support, or requesting a new feature.

Orchestrating AI is just another use case platform engineers need to support in their portals. AI agents need orchestration and governance, just as developers have historically needed a lot of context to navigate their software development life cycle.

Portals are a massive leap forward, no doubt, but they still sit slightly outside the day-to-day flow of development. Still, if you’ve already built out some functionality in the portal, you’re a step ahead of everyone else in terms of adopting AI.

Here’s what we mean: Imagine a scenario where you're deep in the zone, iterating on a feature with Cursor. You have to test something now, which means you have two options:

- You can test it manually, submitting a ticket to get a testing environment from DevOps, writing your own tests, and asking Cursor to check your work. You hope for the best.

- You can direct Cursor to use Port’s MCP server to complete testing for you.

The second option is obviously faster. But it won’t really be more efficient if the tests fail, or if Cursor misses flaws you’ll have to fix later. This is where Port’s MCP server stands out from other options.

Using Port’s remote MCP server

Port’s remote MCP server helps prevent that rework because it makes all of the self-service actions you’ve defined for developers accessible to AI agents. When you connect Cursor or any other AI tool to Port’s MCP server, the AI can see all of the tools you’ve built in Port and provided to your developers.

In our case above, Cursor can use Port’s MCP server to find the action that will let it create a dev env. It will also see what it must provide or do in order to perform the action successfully, such as branch details.

The MCP server gives AI agents a way to look up the necessary info to run the action, and returns a helpful, in-context, and more detailed response to the request to spin up a new environment, such as where it is and when it will expire.
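To make the lookup step concrete, here is a minimal Python sketch of how an agent might find an action and work out which inputs it still needs before running it. Everything in it is an assumption for illustration: the `Action` shape, the `CATALOG` contents, and the helper names are hypothetical and are not Port’s API or data model.

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    """A self-service action as an agent might see it (hypothetical shape)."""
    identifier: str
    description: str
    required_inputs: list[str] = field(default_factory=list)


# Hypothetical catalog. A real agent would receive this from the portal.
CATALOG = [
    Action("create_dev_env", "Create a dev environment", ["branch", "ttl_hours"]),
    Action("restart_service", "Restart a running service", ["service"]),
]


def find_action(catalog: list[Action], keyword: str) -> Action:
    """Return the first action whose description mentions the keyword."""
    for action in catalog:
        if keyword.lower() in action.description.lower():
            return action
    raise LookupError(f"no action matches {keyword!r}")


def missing_inputs(action: Action, provided: dict[str, object]) -> list[str]:
    """List the required inputs the agent still has to ask the user for."""
    return [name for name in action.required_inputs if name not in provided]


action = find_action(CATALOG, "dev environment")
todo = missing_inputs(action, {"branch": "feature-payments"})
print(action.identifier, todo)
```

In this sketch the agent already knows the branch, so the only thing left to ask about is how long the environment should live.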

Without the MCP server, the best response Cursor could give would be something like, “Go into Port and run the 'create dev env' action.”

Now, if you want to test something, all you need to do is chat to your AI agent pair programmer:

"Hey, spin up a new staging env for feature-payments, add Redis, and auto-delete it in 72 hours."

Seconds later, the AI agent will reply:

"Done. Here's your environment: [link]"

There’s no form to fill out, no ticket to submit, and no guesswork. You ask for what you need, and you get it.

This is the promise of AI agents, when thoughtfully controlled and guided by an internal developer portal. This is what the new normal could be: powered by AI agents, chat interfaces, and workflow engines that understand developer intent and translate it into infrastructure actions.
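As a rough illustration of what translating intent into an infrastructure action can look like, here is a minimal Python sketch of the structured request an agent might derive from the chat message above. The `EnvRequest` type and its field names are assumptions made for this example, not Port’s schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class EnvRequest:
    """Structured form of a chat request (hypothetical fields)."""
    kind: str
    branch: str
    addons: tuple[str, ...]
    ttl_hours: int

    def expires_at(self, created_at: datetime) -> datetime:
        """Compute when the environment should be auto-deleted."""
        return created_at + timedelta(hours=self.ttl_hours)


# "spin up a new staging env for feature-payments, add Redis,
#  and auto-delete it in 72 hours"
request = EnvRequest(
    kind="staging",
    branch="feature-payments",
    addons=("redis",),
    ttl_hours=72,
)

# A fixed creation time keeps the example deterministic.
created = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
expiry = request.expires_at(created)
print(expiry.isoformat())
```

Keeping the request as typed data rather than free text means the same payload can be validated, logged, and replayed whether a human or an agent produced it.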

How MCP servers improve DevEx

Port doesn’t just manage AI agents; it also gives technical teams the right experience to lead in the areas they know best. Port serves as a control and orchestration layer that coordinates AI agents and human roles with clarity, context, and governance.

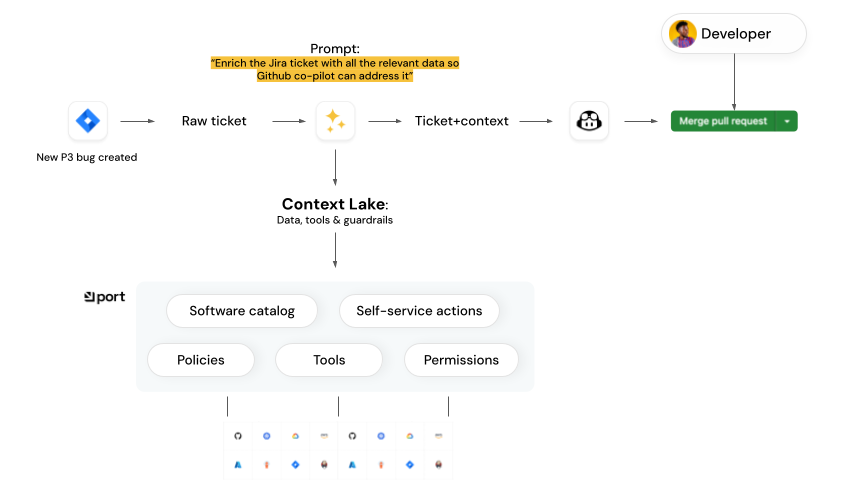

Port helps you build a robust, agentic system for software delivery, including a context lake that is completely machine readable and searchable via the MCP server.

A context lake is a single conduit that agents use to:

- Get the data they need from a software catalog

- See the actions they can use, in the context of the specific task they perform

- View and maintain proper permissions and guardrails while acting autonomously

Port enables a human-in-the-loop approach to AI management. It keeps control in your team’s hands while agents accelerate everyday tasks. Every AI agent decision can be routed to the appropriate channels for review, approval, or revision and resubmission.
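One way to picture human-in-the-loop routing is as a small policy function that decides whether an agent’s proposed action runs immediately, waits for a reviewer, or is rejected. The Python sketch below is only an illustration under assumed rules: the `ProposedAction` fields, the allowlist, and the rule that production changes need review are hypothetical and do not describe Port’s implementation.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_REVIEW = "needs_review"
    REJECT = "reject"


@dataclass(frozen=True)
class ProposedAction:
    """What an agent wants to do (hypothetical fields)."""
    agent: str
    action: str
    environment: str


# Hypothetical guardrails for this example.
ALLOWED_ACTIONS = {"create_dev_env", "open_pull_request", "restart_service"}
REVIEW_ENVIRONMENTS = {"production"}


def route(proposal: ProposedAction) -> Decision:
    """Decide how a proposed agent action should be handled."""
    if proposal.action not in ALLOWED_ACTIONS:
        return Decision.REJECT
    if proposal.environment in REVIEW_ENVIRONMENTS:
        return Decision.NEEDS_REVIEW
    return Decision.AUTO_APPROVE


print(route(ProposedAction("cursor", "create_dev_env", "staging")))
print(route(ProposedAction("cursor", "restart_service", "production")))
print(route(ProposedAction("cursor", "drop_database", "staging")))
```

Checking the allowlist first means an unknown action is rejected outright instead of landing in a reviewer’s queue.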

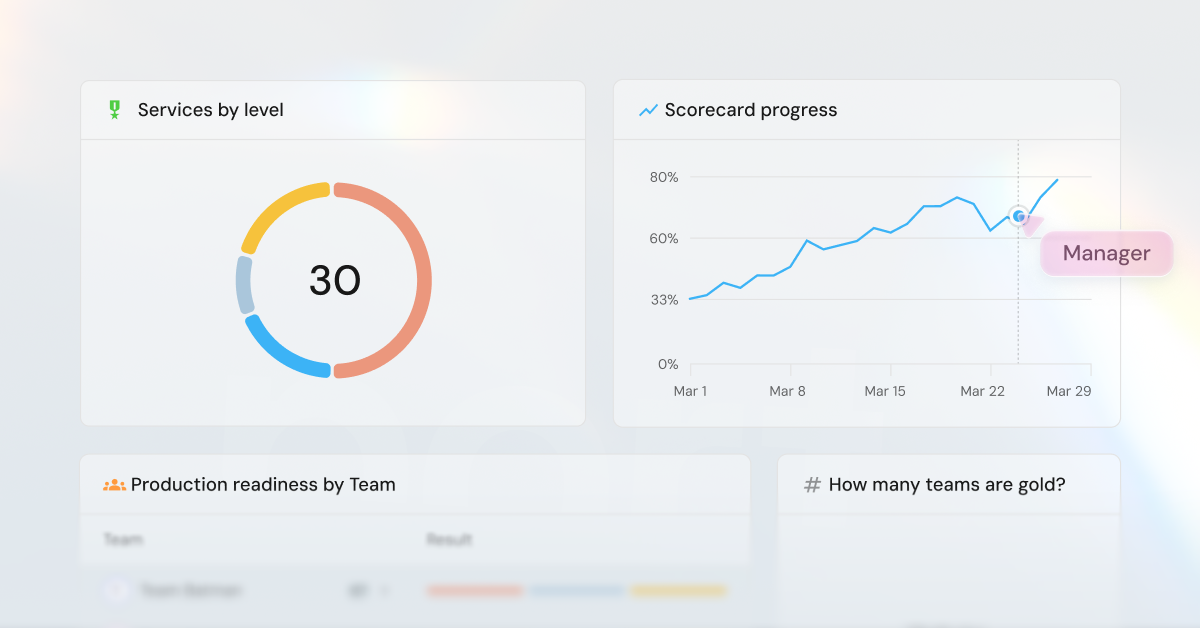

Better yet, your developers, SREs, and managers can track the status of any and all agent-driven tasks, from coding to workflows, pipelines, and incident response through a single interface that spans the full software lifecycle. This alignment keeps everyone informed.

There are also many developer experience improvements to be gained when your portal treats AI agents as first-class users:

- It’s fast: You get accurate, usable responses in seconds, not hours or days.

- It’s conversational: Just ask! No need to learn internal CLI tools or dig through docs.

- It’s context-aware: The bot knows your team, your repo, your service defaults. No prompt engineering or lengthy specs to write.

- You’re asked smart follow-ups: Forgot to mention a region or resource limit? The bot will ask (nicely).

- You can repeat it forever: Codify your actions into self-service tools, bookmark the interaction or save it to your chatbot’s memory, and reuse it later with one click.

- It feels easy: This is what DevOps ideals once promised us: the feeling that the system is finally working with you instead of against you.

People and agents can work together in harmony

Port meets teams where they’re already working, and integrates AI seamlessly:

- SREs can trigger agent actions (like spinning up cloud resources) directly from Cursor, and oversee automated CI fix attempts with clear success/fail indicators.

- Developers can ask Slack, “How many customers are impacted by this bug?”, consume the agent’s output through Jira tickets with contextual insights (e.g., affected microservice, recent deploy logs, test coverage), or receive AI suggestions in Slack channels as they code or triage.

- Managers get a real-time AI control center with a full view of agentic tasks: how often they’re completed, which agents are assigned to which task, and so on. That view can later inform new initiatives that agents and engineers address together.

You can see all of these features in action in our demo video, or take a stab at creating them yourself using our documentation guides.

Wrapping up

We’ve gone from submitting tickets to clicking buttons to simply asking for what we need. As a developer, you no longer need to know how the system works under the hood — you just need to describe what you want. With AI-powered platforms an emerging reality, the system figures out the rest.

Platform engineering needs to adapt to serve AI agents and users because they are — or shortly will become — essential parts of your SDLC. Port can help unify and organize your platform to make it easily accessible to AI agents alongside humans, and provide the context AI needs to perform up to par. Learn more about platform engineering and how developers' roles will change with AI.
